Is Generative AI plagiarism-free?


The debate over whether the output of generative Artificial Intelligence (AI) can be considered plagiarism has intensified as the technology has advanced. Generative AI, which includes models such as GPT (Generative Pre-trained Transformer) for text and DALL-E for images, can create content that looks remarkably as if a human made it. This ability raises legitimate questions about originality and authorship. To better understand the issue, it is useful to explore fundamental machine learning concepts, such as linear regression, and how phenomena such as overfitting can lead people to perceive AI output as copies or direct derivatives of existing works.

Linear Regression: Fundamentals and How It Works

Linear regression is one of the most basic and widely used statistical techniques in machine learning. It predicts an output variable from one or more input variables by finding the line (for a single input variable) or the plane/hyperplane (for several input variables) that best fits the data. Mathematically, this means choosing the coefficients that minimize the sum of the squared differences between the observed values and the model's predictions.
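As a minimal sketch of the least-squares idea for a single input variable, the Python below computes the slope and intercept with the standard closed-form formulas and reports the sum of squared residuals that those values minimize. The function names and the sample points are illustrative assumptions, not taken from any particular library.

```python
# Minimal ordinary least squares for one input variable: y ≈ w * x + b.
# Function names and data are illustrative, not from any specific library.


def fit_line(xs, ys):
    """Return (slope, intercept) minimizing the sum of squared residuals."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # Closed-form solution: w = S_xy / S_xx, b = y_mean - w * x_mean
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    if s_xx == 0:
        raise ValueError("all x values are identical; slope is undefined")
    w = s_xy / s_xx
    b = y_mean - w * x_mean
    return w, b


def sum_squared_residuals(xs, ys, w, b):
    """The quantity that least squares minimizes."""
    return sum((y - (w * x + b)) ** 2 for x, y in zip(xs, ys))


if __name__ == "__main__":
    # Made-up points lying roughly along y = 2x + 1.
    xs = [0.0, 1.0, 2.0, 3.0, 4.0]
    ys = [1.1, 2.9, 5.2, 6.8, 9.1]
    w, b = fit_line(xs, ys)
    print(f"slope={w:.3f}, intercept={b:.3f}")
    print(f"SSR={sum_squared_residuals(xs, ys, w, b):.4f}")
```

For these points the fitted line comes out close to slope 2 and intercept 1; moving either coefficient away from the fitted values can only increase the sum of squared residuals.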

The simplicity of linear regression makes it easy to understand and explain, but it also limits its ability to capture complex, nonlinear relationships between variables. Even so, its core principles carry over to more advanced machine learning techniques such as neural networks: each neuron computes a weighted sum of its inputs, much like a linear model, and training still minimizes a measure of prediction error. By stacking many such neurons (nodes) in multiple layers and passing their outputs through nonlinear activation functions, neural networks can model far more complex relationships.
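To make the link between linear models and neural networks concrete, here is a small Python sketch of a hand-wired network. Each neuron computes the same kind of weighted sum as linear regression and then applies a ReLU nonlinearity; two such neurons are enough to represent y = |x|, whereas the best straight line through symmetric samples of |x| is flat. The weights are chosen by hand purely for illustration (a real network learns its weights from data), and all function names are hypothetical.

```python
# Hand-wired illustration: weights are picked by hand, not learned.


def relu(z):
    """Rectified linear unit: passes positive values, zeroes out negatives."""
    return max(0.0, z)


def identity(z):
    return z


def neuron(inputs, weights, bias, activation=relu):
    # Weighted sum plus bias (the same form as a linear regression model),
    # followed by an activation function.
    return activation(sum(w * x for w, x in zip(weights, inputs)) + bias)


def tiny_network(x):
    """Two hidden ReLU neurons and a linear output neuron computing |x|."""
    h1 = neuron([x], [1.0], 0.0)   # max(0, x)
    h2 = neuron([x], [-1.0], 0.0)  # max(0, -x)
    return neuron([h1, h2], [1.0, 1.0], 0.0, activation=identity)


def best_line(xs, ys):
    """Least-squares slope and intercept, for comparison."""
    n = len(xs)
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    w = s_xy / s_xx
    return w, y_mean - w * x_mean


if __name__ == "__main__":
    xs = [-2.0, -1.0, 0.0, 1.0, 2.0]
    ys = [abs(x) for x in xs]
    print("network:", [tiny_network(x) for x in xs])
    print("best line (slope, intercept):", best_line(xs, ys))
```

The network reproduces |x| at every input, while the least-squares line for the same samples has slope 0 and intercept 1.2 (up to floating-point rounding), so it misses the V shape entirely.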

Overfitting: Implications in Content Generation

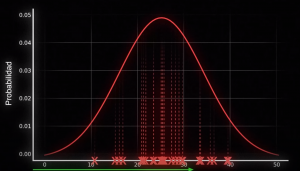

Overfitting occurs when a machine learning model fits the training data too closely, to the point of capturing the noise or random fluctuations in that data set instead of the underlying trends. As a result, the model may fail to generalize, performing poorly at prediction or generation on data outside its training set.
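The following Python sketch illustrates overfitting with made-up data: six noisy samples of an underlying linear trend y = 2x + 1 are fit once with a straight line and once with the degree-5 polynomial that passes exactly through every training point (built here with Lagrange interpolation, chosen for simplicity as a stand-in for an over-flexible model). The data, the alternating noise values, and the helper names are assumptions for illustration only.

```python
# Overfitting demo on made-up data (illustrative assumptions throughout).


def fit_straight_line(xs, ys):
    """Least-squares line y = w * x + b, returned as a prediction function."""
    n = len(xs)
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    w = s_xy / s_xx
    b = y_mean - w * x_mean
    return lambda x: w * x + b


def fit_interpolant(xs, ys):
    """Lagrange polynomial of degree len(xs) - 1 through every training point."""
    def predict(x):
        total = 0.0
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            term = yi
            for j, xj in enumerate(xs):
                if j != i:
                    term *= (x - xj) / (xi - xj)
            total += term
        return total
    return predict


def mse(model, xs, ys):
    """Mean squared error of a prediction function on (xs, ys)."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def trend(x):
    return 2 * x + 1  # the underlying relationship we hope to learn


# Training data: the trend plus alternating "noise" of +/- 0.5.
train_x = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
noise = [0.5, -0.5, 0.5, -0.5, 0.5, -0.5]
train_y = [trend(x) + e for x, e in zip(train_x, noise)]

# Held-out points between the training inputs, labeled by the true trend.
test_x = [0.5, 1.5, 2.5, 3.5, 4.5]
test_y = [trend(x) for x in test_x]

line = fit_straight_line(train_x, train_y)
wiggly = fit_interpolant(train_x, train_y)

if __name__ == "__main__":
    for name, model in [("straight line", line), ("degree-5 polynomial", wiggly)]:
        print(f"{name}: train MSE={mse(model, train_x, train_y):.4f}, "
              f"test MSE={mse(model, test_x, test_y):.4f}")
```

The interpolating polynomial reproduces the training data perfectly (zero training error) yet does clearly worse than the straight line on the held-out points, because it has fit the noise rather than the trend.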

In the context of generative AI, overfitting can lead a model to produce output that is extremely similar to specific examples it was trained on, rather than new, original content. This is particularly relevant to accusations of plagiarism. If an AI model produces works that are nearly indistinguishable from existing works, it could be argued that it has “copied” them, even though, mechanically, the model generates that output from patterns it has learned rather than retrieving the original works.
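A toy way to see this effect is a character-level Markov text generator, sketched below in Python. It is a deliberately simple stand-in, far removed from how GPT or DALL-E work: the model records which character follows each context of `order` characters in a made-up training sentence. With a short context, several continuations are possible and the generator recombines fragments; with a context long enough that every one is unique in the training text, the model has effectively memorized its data and can only reproduce it verbatim. The sentence, the orders, and the function names are assumptions for illustration.

```python
import random
from collections import defaultdict

# Toy character-level Markov generator (illustrative; not how GPT works).
TRAINING_TEXT = "the cat sat on the mat and the dog sat on the log"


def train(text, order):
    """Map each context of `order` characters to the characters that follow it."""
    model = defaultdict(list)
    for i in range(len(text) - order):
        model[text[i:i + order]].append(text[i + order])
    return model


def generate(model, seed, max_length, rng):
    """Extend `seed` one character at a time by sampling observed continuations."""
    order = len(seed)
    out = seed
    while len(out) < max_length:
        choices = model.get(out[-order:])
        if not choices:  # context never seen with a successor: stop
            break
        out += rng.choice(choices)
    return out


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so runs are repeatable
    for order in (2, 20):
        model = train(TRAINING_TEXT, order)
        text = generate(model, TRAINING_TEXT[:order], 80, rng)
        print(f"order={order:2d} copied verbatim={text == TRAINING_TEXT}: {text!r}")
```

With `order=20` every context occurs only once in the training sentence, so each step has exactly one possible continuation and the output is the training text itself; with `order=2` the model still only stitches together fragments it has seen, but it can combine them into sequences that do not appear in the original.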

Plagiarism and Generative AI: A Question of Originality

The question of whether generative AI output can be considered plagiarism centers on originality and authorship. An AI model has no intention or consciousness; it simply processes and generates content based on its training data and the algorithms that guide its learning. Generative AI creates new content from the vast amount of information and examples it was trained on, but that content may reflect specific styles, ideas, or even fragments of the training works.

The distinction between inspiration and direct copying is essential here. In the arts and sciences, drawing inspiration from previous work is common and accepted practice. However, when the output of an AI model closely resembles an existing work without attribution, legitimate concerns about plagiarism arise. The key question is how such cases should be handled at the legal and ethical level, given the limitations and capabilities of current technology.
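One crude, purely technical way to quantify “closely resembles” is to measure how many word sequences of an output appear verbatim in a given source. The Python sketch below computes the fraction of a candidate text's word 5-grams that also occur in the source; the choice of n = 5, the simple tokenization, the sample sentences, and the function names are all assumptions for illustration. A high score flags near-verbatim reuse, but it cannot detect imitation of style or ideas, and no threshold on it is a legal or ethical standard for plagiarism.

```python
import re


def word_ngrams(text, n):
    """Set of lowercase word n-grams (as tuples) in `text`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def verbatim_overlap(candidate, source, n=5):
    """Fraction of the candidate's word n-grams that also appear in the source."""
    cand = word_ngrams(candidate, n)
    if not cand:  # candidate has fewer than n words
        return 0.0
    return len(cand & word_ngrams(source, n)) / len(cand)


if __name__ == "__main__":
    source = "The quick brown fox jumps over the lazy dog near the river bank."
    candidates = {
        "copy": "The quick brown fox jumps over the lazy dog near the river bank.",
        "partial": "Yesterday the quick brown fox jumps over a fence.",
        "paraphrase": "A fast auburn fox leapt across a sleepy hound by the water.",
    }
    for label, text in candidates.items():
        print(f"{label}: {verbatim_overlap(text, source):.2f}")
```

For the three sample candidates this prints 1.00, 0.40 and 0.00 respectively: the paraphrase scores zero even though it clearly borrows the source's idea, which is exactly the gap between measuring copying and judging originality.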

The discussion about generative AI and plagiarism is complex and multifaceted. It involves not only understanding the technical foundations of machine learning, such as linear regression and the phenomenon of overfitting, but also broader questions of ethics, copyright and creativity. As we move forward, it is crucial to develop legal and ethical frameworks that recognize both the innovative capabilities of AI and the need to protect and respect intellectual property and copyright. The key will be to find a balance that encourages both innovation and fairness.